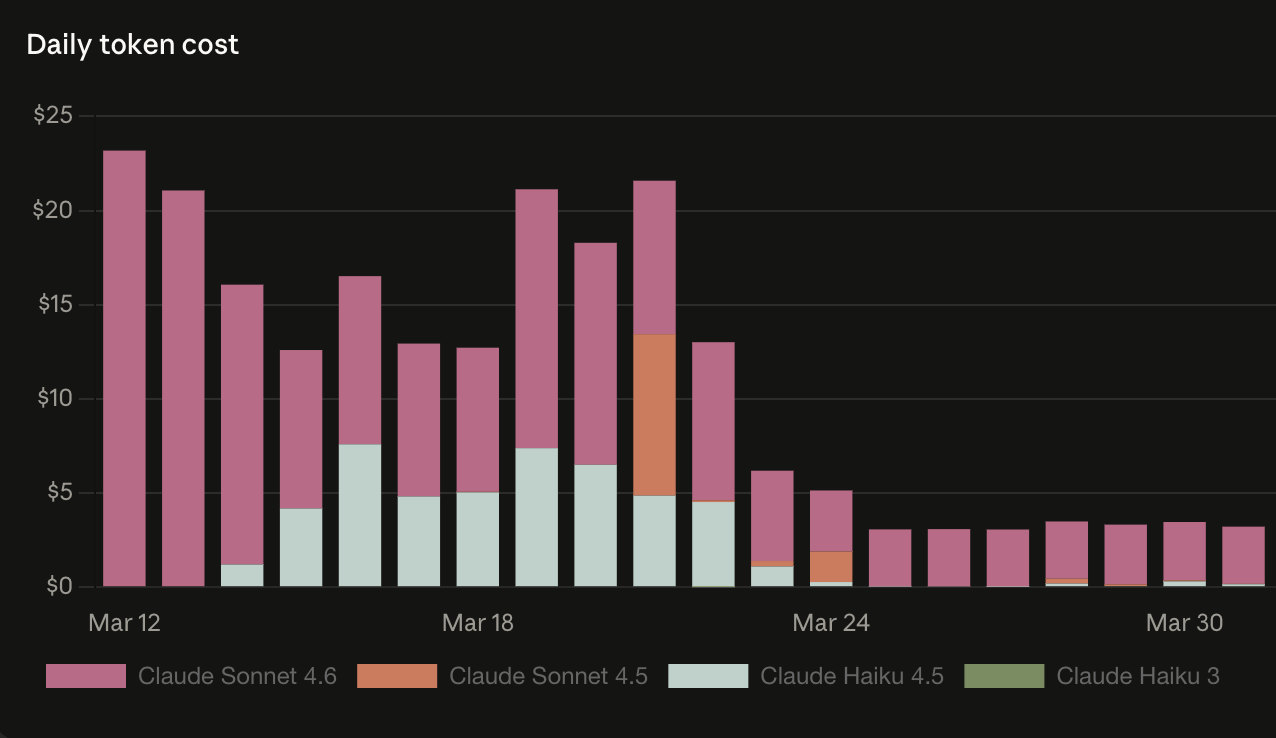

In Part 2, I predicted the n8n rebuild would cost $44-70/month.

I was wrong.

The actual number came in at about $90 - not including hosting. Which is still significantly cheaper than the $330 I was burning on OpenClaw, so the argument holds. But I said I’d report real numbers, and $90 isn’t $70. Worth naming.

What 30 Days Actually Looked Like

The build was harder than I expected. OpenClaw’s pitch is that you’re up and running fast - and that’s real. A few days from design to a working pipeline. The n8n version took roughly twice as long. More planning, more wiring, more decisions about how to structure state across workflows.

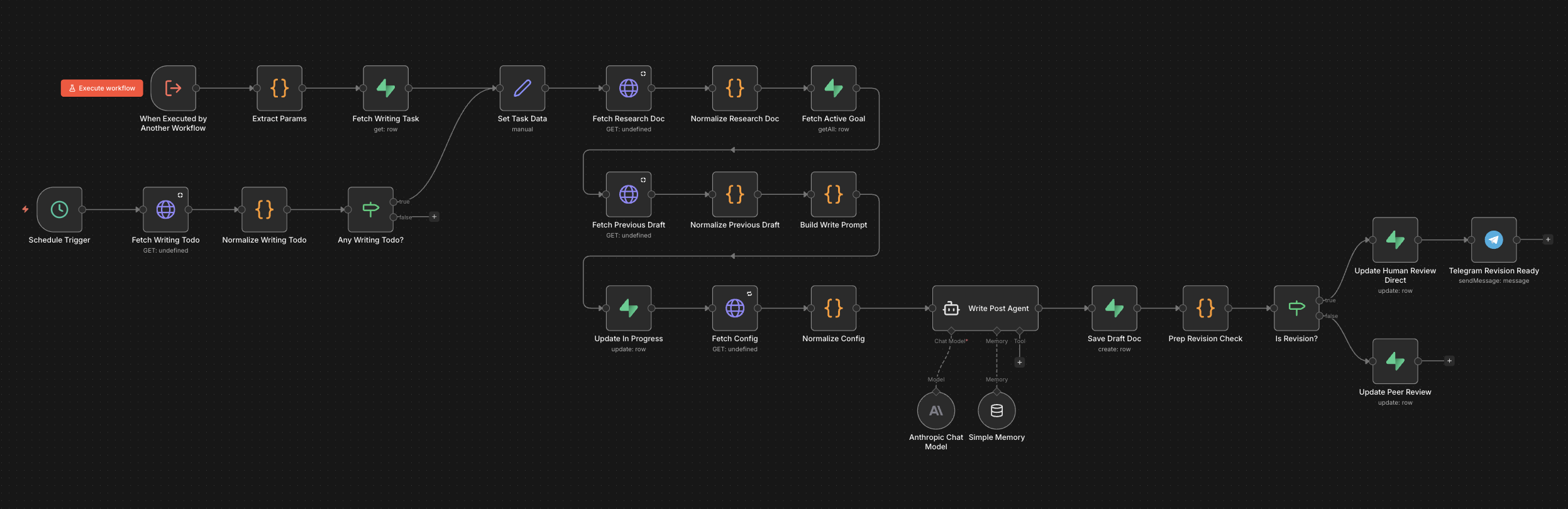

And the early days were messy. Day-to-day issues while I was still building and running at the same time. That part wasn’t pretty. The thing ended up as 9 sub-workflows by the time it was done - research, writing, review, approval, publishing, the Telegram layer, and a few utility flows holding it together. More surface area than I originally planned for.

The difference that actually mattered: problems solved stay solved.

With OpenClaw, I was patching the same failure modes on a rotating cycle. New guardrails, new drift, new issues. The researcher would stabilize for a few days then find a new way to silently fail. The AGENT.md rewrites never really stuck.

With n8n, the issues I fixed in week one didn’t come back in week three. The failure curve actually went down. Now it’s running consistently enough that I’ve started adding features instead of chasing bugs - which is what “stable automation” is supposed to feel like.

The Telegram Agent: 80% of Oracle

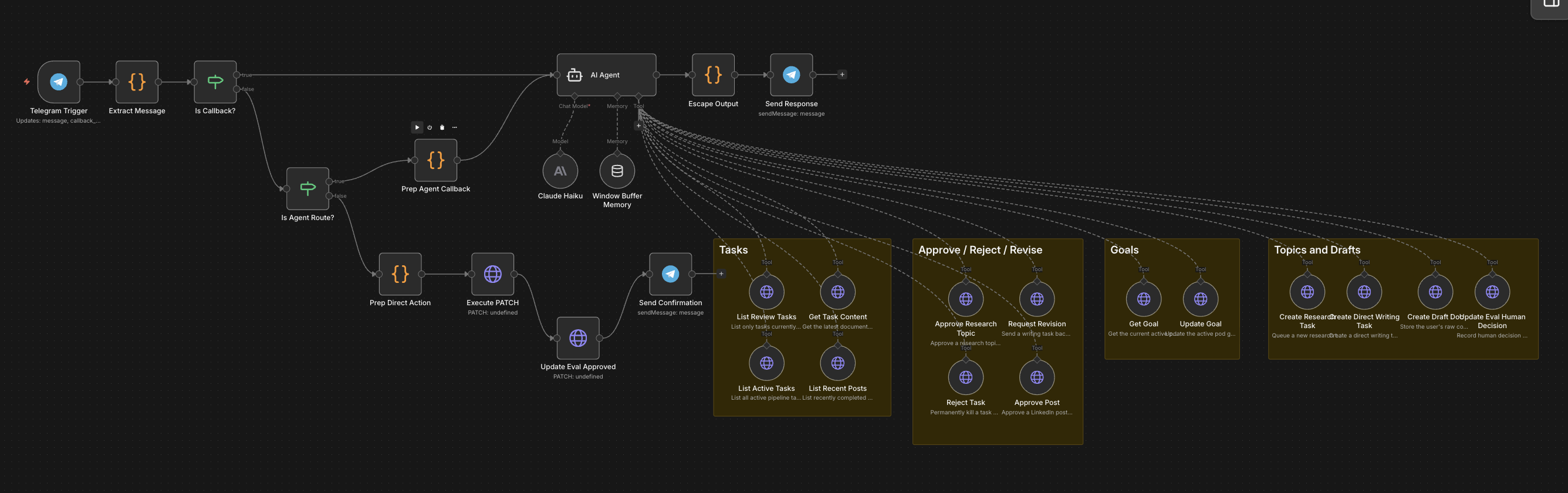

I said in Part 2 that I rebuilt the conversational interface - a Telegram chat agent that could surface pipeline state and accept approvals - scoped correctly this time. No orchestration responsibility. Just coordination.

Honest assessment: it’s about 80% as good as Oracle.

What I kept: I still see the pipeline conversationally. I get notified when new research topics come in, when posts need my approval, when something actually gets published. I can suggest topics and add revision notes to drafts in plain English. That’s most of what made the OpenClaw setup feel like the future.

What I lost: the ability to ask the system why something went wrong and have it actually look inward. Oracle could describe its own internal state when something was off. The n8n chat agent reads the pipeline. It doesn’t diagnose it.

That’s a real trade-off. Not a dealbreaker - but if you valued that introspective quality in agent-native systems, know that it doesn’t survive the rebuild cleanly.

The Honest Part About Creative Quality

The n8n version required more prompt work to get workable posts. Didn’t fully see this one coming.

More system prompt tweaking, more iteration on the writer instructions, more time dialing in the voice. The OpenClaw version felt more natural to train - and I think I know why.

The agent souls thing.

I named those agents for a reason. Oracle, Tank, Neo, Smith - each had a defined personality and operating style. The researcher had a specific lens. The writer had a specific mode. The reviewer pushed back from a different angle by design. I think that actually contributed something real to the output. Whatever that combination produced, it seemed to develop a better feel for what I’d consider a good post. Faster, with less hand-holding.

Replicating that in n8n took work. Not impossible, just more deliberate.

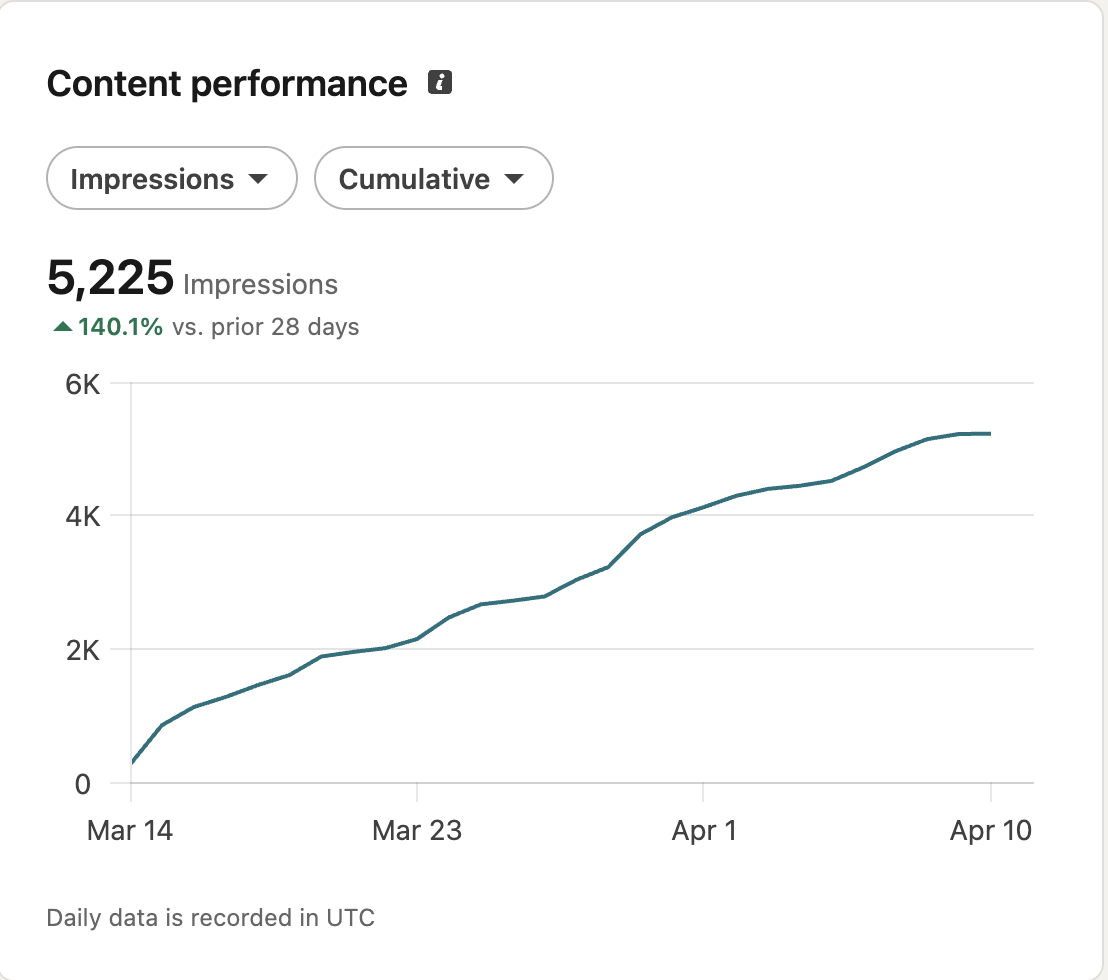

Engagement and impressions came in a couple of percentage points lower than during the OpenClaw period. I don’t think that’s the architecture - it’s probably post topic variance and outside factors. But I’m not going to pretend the writing snapped back immediately either.

The Final Math

| | OpenClaw | n8n Rebuild |
|---|---|---|
| Infrastructure | Included | $14/mo (self-hosted) |
| Token costs | ~$316/mo | ~$90/mo |
| Total | ~$330/mo | ~$104/mo |
| Daily avg token cost | ~$15/day | ~$3/day |
| Daily debugging | Near-daily | Rare |
| Reliability | Inconsistent | Consistent |
| Build time | ~1 week | ~2 weeks |

The Anthropic cost timeline tells the real story. OpenClaw averaged around $15/day with regular spikes above $20 - the agent loops running unchecked, retrying, wandering. The n8n period averages $3/day with no spikes. Flat and predictable. That’s not just cheaper - it’s a different behavioral profile.

~80% reduction in token costs day-to-day. The $14/mo hosting is in the total, sure, but I run a lot of other things on that instance - I wouldn’t pin it on this pipeline. If you’re already self-hosting n8n, the only number that matters is the token line. More stable. Reproducible. Similar creative output with more upfront prompt work.
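
For anyone who wants to check the arithmetic, here is a quick sketch that runs the table’s own figures through a percentage-drop calculation. The dictionary keys and the `pct_reduction` helper are names made up for this example; the dollar amounts are copied from the table above.

```python
# Figures copied from the comparison table (USD). Key names are illustrative.
openclaw = {"tokens_per_month": 316, "total_per_month": 330, "tokens_per_day": 15}
n8n = {"tokens_per_month": 90, "total_per_month": 104, "tokens_per_day": 3}


def pct_reduction(before: float, after: float) -> float:
    """Percentage drop going from `before` to `after`."""
    return (before - after) / before * 100


for key in openclaw:
    drop = pct_reduction(openclaw[key], n8n[key])
    print(f"{key}: {drop:.0f}% lower")
```

The ~80% figure comes from the daily averages ($15 to $3). On the monthly token line ($316 to $90) the same calculation gives roughly 72%, and about 68% on the totals once hosting is included.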

Still worth it. I’d do it again.

So Which One Should You Use

OpenClaw isn’t dead to me. If I ran it again with cheaper models and tighter guardrails built in from day one, I think a lot of the cost and reliability problems are solvable. For genuinely open-ended work - the kind where you can’t draw a flowchart - it’s still the right tool.

But if cost is a concern - and it usually is - a structured automation approach will always win. n8n, a coded pipeline, whatever fits your stack. Use AI for the nodes that actually require judgment. Let the infrastructure handle everything else.

That test from Part 2 still stands: can you draw a flowchart of the process? If yes, the flowchart should be code.
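
To make that split concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not the actual n8n workflow: the `Post` dataclass, the step functions, and `fake_model` (a stand-in for a real model call) are all hypothetical names, and which steps count as judgment nodes is a guess for the sake of the example.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model interface: prompt in, text out.
ModelFn = Callable[[str], str]


@dataclass
class Post:
    topic: str
    draft: str = ""
    review_notes: str = ""
    status: str = "new"


def write(post: Post, model: ModelFn) -> Post:
    # Judgment node: the model produces the draft.
    post.draft = model(f"Write a post about: {post.topic}")
    post.status = "drafted"
    return post


def review(post: Post, model: ModelFn) -> Post:
    # Judgment node: the model critiques the draft.
    post.review_notes = model(f"Critique this draft: {post.draft}")
    post.status = "reviewed"
    return post


def approve(post: Post, approved: bool) -> Post:
    # Human decision, recorded as plain data. No model involved.
    post.status = "approved" if approved else "rejected"
    return post


def publish(post: Post) -> Post:
    # Deterministic step: refuses to run unless the post was approved.
    if post.status != "approved":
        raise ValueError(f"cannot publish a post with status {post.status!r}")
    post.status = "published"
    return post


def run_pipeline(topic: str, model: ModelFn, approved: bool) -> Post:
    # The flowchart, written as ordinary control flow.
    post = review(write(Post(topic), model), model)
    post = approve(post, approved)
    if post.status == "approved":
        post = publish(post)
    return post


def fake_model(prompt: str) -> str:
    # Stand-in so the sketch runs without any API key.
    return f"[model output for: {prompt[:40]}]"


print(run_pipeline("n8n vs agents", fake_model, approved=True).status)
```

The model only ever sees a prompt and returns text. Ordering, approval gating, and the publish guard live in ordinary code, so a fix to any of them stays fixed.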

Everything else is just an expensive cron job. And $90/month is still a lot to pay for a cron job - it’s just a much easier number to accept.

One More Step

Something kept nagging at me through the whole n8n build: I was still working around a platform.

n8n is good software. But every workflow platform has a ceiling - things you can’t quite do cleanly, abstractions that don’t fit your data model, features you need to bolt on. Multi-tenancy is a good example. Running this pipeline for more than one account in n8n is possible. It’s also a headache I’d rather not have.

So I took the hypothesis to its logical end. If the flowchart should be code - why isn’t it just code?

I’m currently testing a full Python implementation. Postgres for the database, Redis and Celery for queued tasks, FastAPI as the API layer, Docker and Render for deployment. No platform dependencies. No working around someone else’s abstractions. The AI still only touches the same four creative nodes. Everything else is just Python.

Total control over the pipeline logic. Cleaner path to multi-tenancy. A few remaining infrastructure costs that disappear when you own the stack outright.
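
As a rough picture of what a cleaner path to multi-tenancy can look like in plain code, here is a small sketch where the tenant is just a column and a function argument. It is not the implementation under test: it uses the standard library’s `sqlite3` as a stand-in for Postgres so it runs anywhere, and the table layout, function names, and account ids are all hypothetical.

```python
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    # One shared table; every row carries the tenant it belongs to.
    conn.execute(
        """
        CREATE TABLE posts (
            id INTEGER PRIMARY KEY,
            tenant_id TEXT NOT NULL,
            topic TEXT NOT NULL,
            status TEXT NOT NULL DEFAULT 'new'
        )
        """
    )


def add_topic(conn: sqlite3.Connection, tenant_id: str, topic: str) -> int:
    # Returns the id of the newly inserted row.
    cur = conn.execute(
        "INSERT INTO posts (tenant_id, topic) VALUES (?, ?)",
        (tenant_id, topic),
    )
    conn.commit()
    return cur.lastrowid


def pending_topics(conn: sqlite3.Connection, tenant_id: str) -> list[str]:
    # Every query is scoped by tenant_id, so accounts never see each other's rows.
    rows = conn.execute(
        "SELECT topic FROM posts WHERE tenant_id = ? AND status = 'new' ORDER BY id",
        (tenant_id,),
    ).fetchall()
    return [row[0] for row in rows]


conn = sqlite3.connect(":memory:")
init_db(conn)
add_topic(conn, "acct_a", "n8n cost review")
add_topic(conn, "acct_b", "agent guardrails")
print(pending_topics(conn, "acct_a"))
```

Passing `tenant_id` explicitly into every function is the simplest version of the idea. A production setup would likely add stronger isolation on top of it.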

It’s functional and in early testing. Part 4 will have the real numbers - cost breakdown, effort comparison against the n8n build, and whether the extra control was actually worth the extra work.

My guess is it will be. But I’ve been wrong about estimates before.